Saturday, January 3, 2009

IE falls below 69% market share, Firefox climbs above 21%

Chicago (IL) – Microsoft was not able to slow the market share loss of its Internet Explorer (IE) web browser in December. IE surrendered more than 1.5 points in December, according to Net Applications, while Firefox, Chrome and Safari posted substantial gains. Over the past 12 months, IE has lost almost 8 points, leaving the browser with its lowest market share since 1999.

Net Applications released updated global browser market share numbers today, indicating that IE is losing users at an accelerated pace. The browser’s share dropped from 69.77% in November to 68.15% in December. Most rivals were able to pick up a portion of what IE surrendered. Firefox gained more than half a point and ended up at 21.34%, Safari approached its next big hurdle with 7.93% and Chrome came in at 1.04%, the first time Google was able to cross the 1% mark. Opera remained stable at 0.71%, but it is clear that the Norwegian browser cannot attract any of the users IE loses.
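The figures above are changes in percentage points, not relative changes, and the two are easy to confuse. As a small sketch using only the November and December IE numbers quoted here (the function names are ours, chosen for illustration), the difference looks like this:

```python
# IE shares quoted in the article (percent of global usage, per Net Applications).
november_ie = 69.77
december_ie = 68.15


def point_change(before: float, after: float) -> float:
    """Change in percentage points, rounded to two decimals."""
    return round(after - before, 2)


def relative_change(before: float, after: float) -> float:
    """Change as a percentage of the starting share, rounded to two decimals."""
    return round((after - before) / before * 100, 2)


ie_points = point_change(november_ie, december_ie)
ie_relative = relative_change(november_ie, december_ie)

print(f"IE moved {ie_points} points, a relative change of {ie_relative}%")
```

A drop of 1.62 points corresponds to IE losing a little over 2% of the share it held a month earlier, which is why the article sticks to points throughout.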

Over the past 12 months, IE gave up 7.9 points of market share, while Firefox gained 4.5 points and Safari nearly 2.4 points. Chrome, released in September of this year, is an entirely new player and Opera was able to add 0.7 points to its usage share and remains just above Netscape, which is still listed at a slowly declining 0.57%.

As reported previously, the main reason for IE’s market share loss remains the installed base of corporate IE6 users (and some home users), which is where Microsoft’s browser is most vulnerable at this time. While IE7’s market share has not declined much (from about 45% during the week and about 50% on weekends in the beginning of the year to about 44% during the week to 48% on weekends at the end of the year) and IE8 Beta 1 and 2, released in March and August of 2008, have grown to 0.96% share, IE6 is declining rapidly and at a much faster pace than IE7 and IE8 are able to gain.

Since IE6 is used primarily within corporations, its market share is much higher during the week than it is on weekends. As a result, all other browsers gain on weekends and especially during a holiday. Because of that circumstance, Net Applications noted that the December numbers should be taken with a grain of salt. However, it is worth noting that IE6 achieved market share numbers of about 28% during the week and about 21% on weekends in early 2008. In December, these numbers were down to about 20% during the week and 15% on weekends.

There are very few browser market share numbers available that would provide a credible and especially comparable indication of how IE’s market share evolved in the late 1990s and early 2000s. However, based on what we were able to dig up, there seems to be agreement that it was IE4 that took Microsoft to about 60% in early 1999 and that IE5 (released in March 1999) lifted the browser’s market share above 70% by early 2000.

The market share loss of IE6 is a problem Microsoft will have to address soon, if the company remains serious about the browser market, especially if it intends to fine-tune the browser to work well with its cloud operating system Windows Azure. As far as we can see right now, IE8 will not be able to stop the bleeding, as it follows the same design ideas Microsoft has had in place with previous browsers. While Apple, Mozilla and Google have found effective ways to quickly transition their users from an older browser to a newer version, Microsoft is clearly struggling to move users from IE6 to a more recent version.

For example, Firefox 2.0 usage share has been declining consistently over the past six months, from about 17% in the beginning of June to currently about 3.3%. In the same time, Firefox 3.0 climbed from less than 1% into the 18-19% range.

PlayStation 3 used to hack SSL, Xbox used to play Boogie Bunnies

Between the juvenile delinquent hordes of PlayStation Home and some lackluster holiday figures, the PlayStation has been sort of a bummer lately, for reasons that have nothing to do with its raison d'etre -- gaming. That doesn't mean that the machine is anything less than a powerhouse -- as was made clear today when a group of hackers announced that they'd beaten SSL, using a cluster of 200 PS3s. By exploiting a flaw in the MD5 cryptographic algorithm (used in certain digital signatures and certificates), the group managed to create a rogue Certification Authority (CA) which allows them to create their own SSL certificates -- meaning those authenticated web sites you're visiting could be counterfeit, and you'd have no way of knowing. Sure, this is all pretty obscure stuff, and the kids who managed the hack said it would take others at least six months to replicate the procedure, but eventually vendors are going to have to upgrade all their CAs to use a more robust algorithm. It is assumed that the Wii could perform the operation just as well, if the hackers had enough room to spread out all their Balance Boards.

MD5 collision creates rogue Certificate Authority

At the 25th Chaos Communication Congress (CCC) today, researchers will reveal how they utilized a collision attack against the MD5 algorithm to create a rogue certificate authority. This is pretty big news, so read on.

When you make a secured connection to a website via HTTPS, a public key certificate is sent from the server to your computer. This certificate contains a digital signature which your computer uses to verify the identity of the site to which you’re connecting. Certificates are “signed” by a Certificate Authority (CA), which acts as a kind of middle-man: you trust the CA, so you can trust the certificates signed by the CA. Anyone can create a certificate authority, though, so most browsers have a list of known reputable and trustworthy CAs. When your computer gets a certificate from a server, your browser checks the CA that issued it to determine whether the CA is trustworthy. If the CA is trustworthy, your browser assumes that the certificate being presented is trustworthy.

The public key cryptography utilized by Certificate Authorities is evolving, as are most things in the technology world. Some CAs used the MD5 algorithm to compute the digital signatures for certificates. MD5 has been known for some time to be weak against collision attacks, but running a CA is a pretty complex operation, so the entities behind them are slow to change.

Researchers attacked the MD5 algorithm using 200 PlayStation 3 systems and were able to construct a bogus Certificate Authority that looks like a known trusted CA. What this means is that these guys could generate a certificate for www.amazon.com which, when presented to your browser, would be accepted as the real thing. The digital signature on the fake certificate is listed as coming from a supposedly reputable CA, so your browser happily accepts it, reassuringly showing you the little padlock icon.
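To see why a hash collision matters here, it helps to remember that a CA signs the hash of a certificate rather than the certificate itself. If two different inputs share a hash, a signature issued for one also checks out for the other. The sketch below is a toy illustration only: it truncates MD5 to three bytes so that a plain birthday search finds a collision in moments. It is not the researchers' technique, which targeted full MD5 and needed the PlayStation 3 cluster; the message text and function names are made up for the example.

```python
import hashlib
from itertools import count


def weak_digest(data: bytes, nbytes: int = 3) -> bytes:
    """Toy hash: the first `nbytes` bytes of MD5, deliberately weak."""
    return hashlib.md5(data).digest()[:nbytes]


def find_collision(nbytes: int = 3):
    """Birthday search: hash distinct messages until two share a digest."""
    seen = {}  # digest -> first message that produced it
    for i in count():
        message = f"certificate request #{i}".encode()
        digest = weak_digest(message, nbytes)
        if digest in seen:
            return seen[digest], message, digest
        seen[digest] = message


first, second, shared = find_collision()
print(first, second, shared.hex())
```

The two messages differ, yet anything that "signs" only the truncated digest cannot tell them apart. With a 24-bit digest a collision typically turns up after a few thousand tries; the full 128-bit MD5 is far harder to attack, which is where the known weaknesses in the algorithm and the 200 consoles came in.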

Okay, so how does this affect you? If the researchers’ results can be duplicated by a malicious agent, they could generate any number of certificates that would be trusted by browsers all around the world. This alone might be sufficient, though this attack could be coupled with a sophisticated DNS attack to make it really really really hard for anyone to realize that they’d been suckered. Your browser would report that you’re at yourbank.com; your browser would report that you were using HTTPS to protect the connection; and your browser would report that the SSL certificate being used for that HTTPS connection really did belong to yourbank.com. Granted, the level of effort required to perform such an attack is currently enormous, and the potential gains are probably limited, so it’s likely not the kind of thing that would be pulled on average Internet users. But it’s still something about which to be concerned.

The attack outline states “[w]ith optimizations the attack might be done for $2000 on Amazon EC2 in 1 day.” Thankfully, the researchers are not releasing their specific implementation. That’s somewhat reassuring, but expect conniving folks somewhere to try to recreate the researchers’ results for less academic purposes.

The PDF concludes with this: “No need to panic, the Internet is not completely broken” and assures us that the “affected CAs are switching to SHA-1”. SHA-1 is believed to be weak against certain attacks, though, so it might be better for the vulnerable CAs to jump right to SHA-2 or SHA-3.

Bottom line: as always, be cognizant of your browsing habits. If something looks or feels fishy, don’t provide any account names or passwords. Use different passwords for different websites, so that if you do get suckered by a phishing attack the phishers don’t get the keys to your online kingdom.

Top 10 Application Development Stories of 2008

Cloud development platforms, mobile application development and the increasing acceptance of dynamic languages for Web development were among the top 10 stories in the world of software programming. With each passing year, software tools have become more sophisticated, while developers have more languages and tools to choose from than ever before.

1. Cloud development platforms bloom

Google App Engine, Microsoft Windows Azure, Amazon, Salesforce.com and others have entered into the cloud space in force. What must developers do to program to the cloud?

2. Microsoft gets serious about software modeling

Microsoft releases its "Oslo" modeling strategy, joins the Object Management Group and pledges support for UML. Microsoft long held an indifferent if not hostile view of the Unified Modeling Language, but has now done an about-face and is supporting modeling big time, and supporting UML in the Visual Studio 2010 toolset.

3. Mobile app development gets huge

Android, Windows Mobile, iPhone, BlackBerry, Symbian, name your platform. Mobile app development is where the action is. The next step is making it easier to build apps that run on more than one platform.

4. Dynamic languages take off

Ruby, PHP, JavaScript, Python, et al, see mainstream use. Ruby is used in all kinds of social networking and Web 2.0 environments, though it takes shots for not being as scalable as some other languages. Meanwhile, PHP, Python and others see their use on the rise in the enterprise.

5. ECMAScript (JavaScript) 4 is tabled

ECMA was on track to release the next major version of the ECMAScript specification, but several members of the core working group looking at the issue said let's slow down and make things less complicated. ECMAScript is the standards embodiment of JavaScript, which is the lifeblood of browsers. Tabling ECMAScript 4 means companies like Microsoft can have more time to implement new standards into their browsers.

6. Multicore processors put pressure on application developers

With the advent of multicore systems, developers are being forced to write applications that support them. It means developers essentially have to rethink their development strategies and gear up for parallel environments. Companies such as Microsoft, Intel, IBM, Sun and others are looking at the issue.

7. Microsoft/Adobe rivalry heats up

Microsoft continues to encroach on Adobe's turf in the rich Internet application (RIA) space with Silverlight 2 and Windows Presentation Foundation, and in the designer/developer workflow arena with Microsoft Expression. However, Adobe continues to innovate, delivering Flash Player 10, a new version of Adobe AIR and its new "Thermo" design tool. Meanwhile, Sun enters the fray with JavaFX.

8. Planting the seeds of 'development as a service'

The Basecamp guys, 37 Signals, do a great job, but there's also Heroku, Bungee Connect and a few others: They've all done special cases of development or team collaboration. If someone were to come in and combine them all, it could be a pretty good (and modern) competitor to Visual Studio and WebSphere. It certainly portends a direction the industry should be taking toward hosted rather than on-premises servers.

9. OSGi (Open Services Gateway initiative) makes a big splash

Eclipse, NetBeans, the Spring Framework, Apache and others are looking to OSGi as the future of their Java deployment environments. Others see OSGi not only for deployment but for its programming model, which is starting to encroach on Java EE APIs.

10. The Spring Framework wins converts

Spring has become a leading player in enterprise Java because it helps to simplify development as opposed to Enterprise JavaBeans (EJBs) and J2EE (Java 2 Platform, Enterprise Edition) or Java EE (Java Enterprise Edition).

Electronics Recycling for the New Year

by Maura Grunlund / Staten Island Advance

If you were gifted with a new computer or other electronics and you're thinking of cleaning house for the New Year you might want to consider recycling those items.

Electronic garbage is becoming one of America's fastest growing problems. E-waste is estimated at four percent of the US municipal solid waste and growing at an accelerated rate, according to the US Environmental Protection Agency and Fast-teks computer service company.

On Staten Island and New York City as a whole we are permitted to put electronics out with the trash until July 1, 2010. However, reusing and recycling are ways to keep hazardous materials out of our landfills and, ultimately, our environment.

Many major manufacturers and retailers have take-back programs for used computers and other electronics. The city Department of Sanitation used to have special recycling events for these items but so far hasn't scheduled any for 2009 due to budget cuts.

Equipment that is less than five years old often may be refurbished and donated to non-profit organizations.

Before tossing or recycling a computer remove all personal and business information. If you're donating make sure all the accessories such as monitor, keyboard, mouse and software packages are included. Keep a record of your donation since it is tax deductible.

Windows 7 surprise: DivX built in

No more trawling the web for the latest media codecs. Windows 7 comes ready to play all your favourite "downloaded" videos.

One of the new features announced at the recent Windows 7 Reviewer’s Workshop in LA is that Windows 7 will natively support a number of popular media formats, so that users don’t have to worry about finding, downloading and installing third-party codecs. This is an evolution in media support which is similar to the inclusion of native MPEG-2 playback in Windows Vista, providing the DVD playback functionality which was missing in Windows XP.

It's an interesting change by Microsoft, which, in the past, has doggedly clung to the hope that Windows Media Video will end up as the prevailing video format for the internet. It appears to have finally conceded that the vast majority of people are watching downloaded stuff in DivX or Xvid -- possibly a realisation driven by the enormous amount of telemetry data it has collected from users of Vista that it never had access to through XP. It has stopped short of bundling Adobe Flash support into Windows, though, as it develops its own Silverlight technology.

Windows 7 will also support H.264 video and AAC audio. The support for AAC will be welcome news for people with music and video that has been encoded in Apple iTunes, as Windows 7 will be able to play all iTunes media through Windows Media Player. Unfortunately, this won't apply to media that has been purchased from Apple's iTunes store, because Windows 7 can't decode the Apple FairPlay DRM, which Apple refuses to license to anyone else.

The ability to play back these additional formats has implications for new Windows 7 services like libraries and networked media player support, as Windows 7 users can index and search across their iTunes media without needing to use iTunes as the default player, and can send a wider variety of media content to a centralized location.

A more subtle user benefit is that by not having to download third-party codec bundles (which is convenient in itself), users can minimise the inevitable build-up of unverified software running on their systems. Most major codecs are freely available, but you often need to install multiple disparate packages to get the widest possible support for digital media -- or run an 'all in one' codec installer which may also come bundled with hidden malware inside. Additionally, these codec packages can interfere with each other, and the codecs are not necessarily optimised to run efficiently.

By bundling a wide variety of media formats into Windows 7, Microsoft has created an operating environment which negates the need for third-party codecs and should therefore run more stably and reliably. It also brings blanket support for the most popular online media formats, providing an environment in which users can start playing their favourite content immediately.

The New Year Linux Resolution: Switching to Linux for a Week

The plan: Ring in the new year by switching over to Linux for a week, documenting each day of the transition.

Day One, Research and Installation.

My impressions of the Linux operating system are coloured by memories of the first time my computer-whiz friend unveiled his sort-of-new copy of Redhat Linux to me. “Check this out!” he said. “This OS doesn’t suck like everything Microsoft makes!” It came in an over-sized jewel case with 4 CDs, handed down second-hand from another computer-whiz friend who recommended we try it.

Upon installing it we were greeted with an unceremonious command console that might as well have been written in the ancient tongue of the long-dead tribe of Gnitth Shhta Star-God worshippers. We had no idea what to do, and it was exciting. Linux had that combination of sparseness, functionality and seriousness that gave it the feel of being a real operating system, unlike that flighty Windows 95. In short, Linux seemed cool.

But that was my first and last encounter with Linux. In the ten or fifteen years since that first Linux install other operating systems have shown up, like XP and OSX, that have mostly pulled my attention away from Linux. Now my impression of Linux is bundled up with old memories of screwing around with the config.sys file on my DOS computer in order to allocate enough virtual memory to get Ultima running. In short, Linux to me has always been synonymous with “command console,” and although command consoles may work well, they definitely aren’t easy to use.

All these years later, now that those newer and simpler operating systems are available, I find myself wondering: why use Linux at all? Why go through all the trouble of installing an operating system that’s difficult to use, when almost everyone has a perfectly fine operating system already installed on their PC? I’ve never seen the reason to make the switch.

But I’ve also heard all the reports about how Linux is different nowadays. “It’s easy to use!” they say. “It’s even easy to install, and it’s way more stable than Windows!” they insist. “It’s not like the old days; Linux has changed, man! Just give it a try, all the cool and smart and handsome people are using it!” Linux still has that indie cred that I experienced all those years ago that makes it seem just a little bit more elite than its competitors, and power-nerds everywhere seem to be cajoling me into trying it.

Lucky for them I have an incredibly weak will. So I’ve decided to give in to peer pressure, light me up some Linux, and trip my way through the alternative operating system carnival in the sky.

All open-source operating system programmers are required by law to look like scary hobo versions of Alan Moore (Credit: Russ Nelson)

Step one is to research what Linux has to offer nowadays. I know absolutely nothing about it, other than the fact that it is associated with penguins and guys with crazy beards, and that I remember it having all the subtlety and ease of use of a sledgehammer to the patience-center of your brain. But my plan is that I shouldn’t really need to know much of anything about it; if all the reports are true, and Linux is no longer the battleaxe it used to be, I should be able to head out and find the most user-friendly version of Linux on the market, pop it in and get all Linuxed up.

So where to start? From what I remember there are at least two or three versions of Linux, so I’ll need to narrow down my choices. Unfortunately, my Google search for “linux os that doesn’t suck” doesn’t turn anything up, so I’ll have to turn to the Internet user’s best friend: Wikipedia. A quick Wiki search reveals that there are actually a few more than two or three Linux builds; in reality there are roughly 158,000 million types of Linux, each of them named after a different type of hat.

Ten-gallon Linux sounded a bit old-fashioned, and Beret Linux really looked too pretentious, so I made my choice to try the decidedly un-hat-like Ubuntu on for size.

At the Ubuntu site I found a cute logo that looks kind of like a red, yellow and orange gun barrel pointing at my eyes. Later on, while eating my lunch, I would realize that it was actually representative of three people holding hands, presumably to keep each other from running away to a Mac or XP operating system.

My goal is to do this as painlessly as possible, so I hurriedly look for a copy of the OS and blissfully ignore anything that looks like a guide or set of instructions. I find a download location, and it turns out that downloading things is pretty easy. (You click on the button that says “download.”) So that’s one point for Ubuntu; good job on making use of basic HTTP, Ubuntu!

The file downloads quite quickly given its size, and a little bit later I’m ready to go. The file is an .iso, so I burn it to a CD, pop it into my drive and reboot.

I’m greeted by a colourful and clear menu, which gives me a series of options for installing. One of them is to try Ubuntu without installing, which is a clever idea for the creators to include, but I decide not to opt for it; my plan is to install Linux as an alternative to Windows and use it consistently, so there’s no point in trying it just yet when I will presumably have it installed in its entirety soon.

So I opt for the full install option. Since I want to keep Windows intact, because it has all kinds of Windowsy things I need, I am going to install Ubuntu on an external hard drive, which I’ve already connected to my computer. Once I start the installer, I am greeted with an earthy-looking background and am serenaded with a truly bitching drum solo. I figure this will probably take a while, so I leave the room to marinate a steak for supper (with garlic, onion and horseradish if you must know.)

As I return I realize I’m actually pretty excited to get this thing installed and try it out. Gleefully I hop into my room to find… it’s locked up. The mouse won’t respond and the screen is stuck in a desktop with a beige background.

Ubuntu Linux probably won't shoot you in the face

So much for the simple install. With the latest development I abandon my bull-headed approach and decide to get some help. Luckily the support forums on Ubuntu’s site have a thread that looks like it addresses my problem. According to the forums it looks like I have to press F4 at the install menu and enter graphic safe-mode; either that or do something with an alternative install CD that I really don’t want to deal with.

I heed the advice about the safe-mode, the installer doesn’t lock up this time and I’m grooving to sick bongo beats once again. I follow the dialogue box, select what I think is my external hard-drive to install on, enter some more basic information, experience a moment of powerful apprehension and potent dread that I might have picked the wrong drive to install on and might end up screwing up my Windows drive, press back a whole bunch, then finally build up the guts to go through with it.

The install process takes about half an hour, during which time I cook up my well-marinated steak (it was delicious, thank you.) I restart my computer and I’m feeling that excitement and wonderment again that I felt all those years ago in those heady days when me and my buddy first experimented with alternative installs. Then my computer starts to boot and… it locks up.

“Damn,” I think, “Something must have gone wrong with the install, which I did on my external hard-drive so that it would be completely separate from my Windows hard-drive so I wouldn’t have to worry about anything.”

Disappointed that I’ve run into another road-block and won’t get to use Linux just yet, I unplug my external hard-drive so I can boot into Windows and go to the support forums for more advice and… my computer locks up. It tells me that GRUB is loading, and to please wait, and then reports Error 21, which is presumably the Linux-talk equivalent of two middle fingers and a crotch-thrust in my direction.

Now I’m super-screwed; the computer I use every day has somehow gotten a whiff of the aromatic Linux that I was installing on my external hard-drive and is now throwing a hissy fit and not talking to me any more. I ask my roommate if I can use his computer, log on to the Ubuntu support forums once again, and post a thread: Subject: AHHHHHHHHHHHHHHH, Body: AHHHHHHHHHHHHHHHH OH GOD OH GOD OH GOD.

Luckily the Ubuntu forum staff are able to interpret my well-considered communication and they inform me that I need to boot from a Windows XP install CD to repair the boot-sector of my XP drive.

Success! My computer is un-ruined. But I’ve had enough excitement for one day, and decide to call it. The forum staff explain to me that they can tell me how to set up Ubuntu on my external hard drive so that it works properly, so tomorrow I’ll take another swing at it.

To put it softly, installing Ubuntu was hell. I ran into more problems than I ever imagined I would, and for a moment I thought my computer was reduced to a pretty silicon and plastic paperweight. The simplicity I was looking for was not there, and I’m not exactly planning to recommend that my parents replace their Mac OS with Ubuntu any time soon, given that they would probably have given up when they couldn’t figure out what an .iso was.

Nonetheless, I’m willing to give Linux the benefit of the doubt; I imagine that the majority of users don’t encounter the sort of problems I have, and I’m willing to concede that my hardware is likely to blame for all the peculiar issues. And while it wasn’t an easy process, the Ubuntu forum staff were very helpful and I was able to solve all my problems fairly quickly. Thumbs up for the support!

So tune in tomorrow, when I put the install problems behind me and move on to testing Ubuntu for the first time!

Mars rovers roll on to five years

The rovers keep on rolling across the dusty surface

The US space agency's (Nasa) Mars rovers are celebrating a remarkable five years on the Red Planet. The first robot, named Spirit, landed on 3 January, 2004, followed by its twin, Opportunity, 21 days later. It was hoped the robots would work for at least three months; but their longevity in the freezing Martian conditions has surprised everyone. The rovers' data has revealed much about the history of water at Mars' equator billions of years ago.

"We realise that a major rover component on either vehicle could fail at any time and end a mission with no advance notice, but on the other hand, we could accomplish the equivalent duration of four more prime missions on each rover in the year ahead."

Spirit is exploring a 150km-wide bowl-shaped depression known as Gusev Crater. It has found an abundance of rocks and soils bearing evidence of extensive exposure to water. Opportunity is on the other side of the planet, in a flat region known as Meridiani Planum. Its data has shown conclusively that Mars sustained liquid water on its surface. The sedimentary rocks at its study location were laid down under gently flowing surface water.

The rovers are now showing some serious signs of wear and tear. Spirit has to drive backwards everywhere it goes because of a jammed wheel; and Opportunity's robotic arm has a glitch in a shoulder joint because of a broken electrical wire. There have been times also when the vehicles have been dangerously short on power because of the dust covering on their solar panels.

The vehicles continue to return breathtaking panoramas

When Spirit and Opportunity do eventually fail, Nasa will have to wait awhile for its next surface mission. It recently delayed the launch of a much more capable vehicle, known as the Mars Science Laboratory (MSL), from this year to 2011. The rover project has been beset by technical and budgetary problems. The decision was taken not long after Europe also put back its rover venture known as ExoMars. Officials cited cost concerns. It is likely all surface missions in future for Nasa and the European Space Agency will be joint affairs because of the high cost of getting spacecraft down on to the planet.

Nasa lost contact with its static Phoenix lander in November. It was operating in much more difficult conditions at a high-latitude location.

Spotify, An Alternative to Music Piracy

The music industry has taken some extreme measures to counter piracy, but it hasn’t found the silver bullet yet. The key is to come up with a service that will fulfill the needs of music lovers, and one that would even be embraced by the most hardcore pirate. With Spotify, this might just become possible.

Spotify is a music service that gives users access to a huge library of music, through a lightweight application that looks like a mashup of the best parts of iTunes and Last.fm. Music is streamed, partly supported by P2P technology, but it plays instantly, like we’ve never seen before.

One of the software engineers at Spotify is Ludvig Strigeus, the creator of uTorrent. It is therefore no surprise that the application uses very few resources, just 12k of memory when we tested it. The rumor goes that some of the money made when uTorrent was sold to BitTorrent Inc. has actually been invested in Spotify, an application that competes with piracy.

When we asked Andres Sehr of Spotify to describe the service, he told us “Spotify is a new way of enjoying music. We believe Spotify provides a viable alternative to music piracy. We think the way forward is to create a service better than piracy, thereby converting users into a legal, sustainable alternative which also enriches the total music experience.”

The quality of the music on Spotify is comparable to 160kbps MP3s, which is more than decent for a streaming application. To fill its library, Spotify has cut deals with EMI, Warner Music, Sony BMG and three other major labels, which all responded positively to the new concept. Interestingly, Spotify also uses P2P technology to stream the more frequently accessed tracks.

“Spotify uses a hybrid p2p system where music is delivered both by our servers and using P2P,” Andres Sehr said. “This allows us to deliver the long tail of music which may not be very popular, as well as quickly serve up the latest hits that the majority of users listen to. P2P allows us to both increase the speed that we deliver music and also lower the cost of streaming it.”
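
As a rough illustration of the hybrid delivery Sehr describes, the choice between peers and servers could be sketched as below. This is only a sketch under our own assumptions: the function name, the peer threshold and the sample peer counts are hypothetical and do not come from Spotify.

```python
# Hypothetical sketch of a hybrid server/P2P source choice.
# None of these names or numbers come from Spotify.

def choose_source(online_peers_with_track: int, min_peers: int = 3) -> str:
    """Pick where to fetch a track from.

    Popular tracks are held by many online peers, so they can be
    fetched over P2P; long-tail tracks with few or no peers fall
    back to the central servers.
    """
    if online_peers_with_track >= min_peers:
        return "p2p"
    return "server"


# Assumed peer counts for two example tracks.
peer_counts = {"latest hit": 250, "obscure b-side": 0}
plan = {track: choose_source(n) for track, n in peer_counts.items()}
print(plan)
```

With these assumed numbers the popular track is fetched from peers and the obscure one from the servers, which matches the split between "latest hits" and the "long tail" in the quote.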

Aside from being a music streaming application, Spotify also allows users to create and share playlists with each other, the top 100 tracks of 2008 according to Pitchfork editors for example. On top of that, the Spotify interface helps you to discover new artists with its “similar artists” and “artist radio” feature.

The overall response from Spotify users seems to be very positive, but can it compete with piracy? Time will have to tell, but Spotify invites are actively being traded within the BitTorrent community, and it has even been well received on some of the most elite music trackers.

One user at the music tracker What.cd wrote: “Honestly it’s going to be huge. I’ve been browsing and playing from its seemingly endless music catalogue all afternoon, it loads as if it’s playing from local files, so fast, so easy. If it’s this great in such early beta stages then I can’t imagine where its going. I feel like buying another laptop to have permanently rigged.”

Spotify is not perfect though. One of the mentioned downsides is that it is not compatible with iPods and other portable MP3 players. The Spotify team hasn’t ruled out the option of an iPod compatible version in the future, but for now they will focus on optimizing the Windows and Mac application.

Overall we can conclude that Spotify definitely has potential, but time will tell if it’s able to compete successfully with piracy. Spotify is currently in beta; invites to the free (ad-supported) version can only be used in the UK, Sweden, Finland, Norway, Spain and France, but restrictions usually don’t stop pirates.

Update: We have a few invites left, but sending them all out takes up a LOT of time. We’ll update this post in a few hours when we’re ready to send out more. According to some of the commentary, an invite is not even needed.

Video: What is Spotify?

More Colleges Expected to Offer Online Interviews

Nelson Brunsting of Wake Forest University demonstrates how the North Carolina college uses webcam technology to interview prospective students. (Wake Forest University Via Associated Press)

RALEIGH, N.C. -- For her college interview, Avery Cullinan put on her best outfit but didn't bother with shoes. She sat in her living room, smiled into her computer's webcam and told an admissions officer more than 800 miles away that Wake Forest University was right for her.

"It's hard to part with money for a half-hour interview," said Cullinan, who avoided a costly trip from her home in Newburyport, Mass., to Winston-Salem, N.C., by using the pilot program at Wake Forest. She was later accepted.

The online interview was part of a push that started in May at the university. Admissions director Martha Allman said she eventually wants to give each applicant -- more than 9,000 of them each year -- a more individualized review before inviting them to Winston-Salem as part of the university's 1,200-student freshman class.

Although a new process at the undergraduate level, webcam technology has been used for years by at least a dozen graduate programs -- including Pennsylvania State University, the University of Georgia and Arizona State University -- to interview prospective students.

David Hawkins, public policy director for the National Association for College Admission Counseling, said he expects the trend to grow.

"Looking ahead, colleges will try to pursue the kind of technology that will create a personal approach to the admission process," said Hawkins, noting that he was not aware of any school other than Wake Forest offering webcam interviews to undergraduate applicants.

But while colleges and universities have increased their online outreach to a generation raised on the Internet, there are still logistical hurdles to webcam interviews. The most notable, Hawkins said, is that financially strapped students may not have the easiest access to a computer.

"There are some limitations to it," he said. "The technology is still not as widely available in order to make it effective."

But Carrie Marcinkevage, director of MBA admissions at Pennsylvania State's Smeal College of Business, where webcam interviews have been offered for three years, said colleges' resistance to change is a bigger issue than students' lack of Internet access.

She said her program's applicants are offered several options for online interviews, including Skype Internet telephone service and Yahoo, AOL or Google instant messaging. All are free or relatively inexpensive, she said.

"It's literally a matter of speaking their language. I don't think it's the students. It's the unfamiliarity of the staff that doesn't know how to use it," said Marcinkevage, cautioning that the virtual world should not replace an actual campus visit when students make their final choice.

After a successful round of Web-based interviews in the early admission process, Wake Forest offered the program to its entire undergraduate applicant pool -- a decision that doubled the number of requests for such interviews.

"We decided this would be a wonderful alternative to the face-to-face interview," Allman said. "We have to stay attuned to how students receive information and how they communicate."

Cullinan, who used her own computer to interview with Wake Forest, said most students can at least borrow Internet access from family, friends or even the local library.

"If you really need to, there are ways to do it," she said.

8020 Media to Shut Down

In a fresh sign of the economic turmoil hitting publishing and the Internet, 8020 Media, the company behind the reader-generated photography journal JPG Magazine, is shutting down.

The company, which described itself as a “revolutionary new hybrid media company,” will announce the move to its community of readers and contributors on Friday morning, according to Mitchell Fox, 8020’s chief executive.

“In the face of these extraordinary economic times, in a devastated advertising climate, we can no longer continue to operate the business due to lack of funds, and hence we have to close 8020 Media effective immediately,” Mr. Fox wrote in a letter he said he was sending to a few friends of the company.

8020 Media was backed by Halsey Minor, the founder of CNet Networks, and located in the San Francisco office of his investing firm, Minor Ventures. It had an unusual, low-cost editorial structure that many media and technology pundits pointed to as a model for the future. JPG Magazine had a small staff but solicited contributions from amateur photographers and asked its community of readers and contributors to vote for the best pictures, which then made it into a bimonthly print magazine. The company’s similar travel magazine, Everywhere, ceased publication in August.

JPG had a circulation of around 50,000 and had recently secured some prominent space on newsstands around the country.

But ultimately the money ran out, and Mr. Minor declined to invest more, according to a person with knowledge of the situation. 8020 was attempting to either raise more money from other investors or to sell itself to big media names, including the Meredith Corporation and Conde Nast, but with no success. Mr. Minor could not be reached for comment on Thursday.

The 18 employees who worked for 8020 were given the holiday week off. On Tuesday, they received individual telephone calls and e-mail messages telling them that the company had exhausted its options and was shutting down.

Here is the full text of Mitchell Fox’s note to 8020’s friends:

In the face of these extraordinary economic times, in a devastated advertising climate, we can no longer continue to operate the business due to lack of funds, and hence we have to close 8020 Media effective immediately.

There is no doubt that our company has done what no others have yet to do…that is, prove that the web and print can work effectively together, one supporting the other.

We’ve also proven that community generated media CAN be a powerful thing…and it can create spectacular media.

The riddle of having a sound web platform support that drives interactivity with a print product has been solved, however, none of us could have predicted the global economic collapse we’ve witnessed in the past few months. So our timing to grow the business and bring it to profitability through even the smallest amount of additional funding could not have been worse.

So, while we sit here at the precipice of profitability, the negative marketplace forces are too strong to overcome, and we must take this regrettable action.

It remains undeniable that the publishing industry MUST find a new model, and mass collaboration and participation in the media property is certainly now proven it can be the foundation of this new model (NOTE: This is NOT citizen journalism).

We’ve cracked the code on marshaling a community around a media property online and in print….and helping them become active, loyal, and engaged participants in both.

We do owe a debt of thanks to Minor Ventures for believing in us, and funding us to this point and to have even given us a chance to make this business successful, and for that confidence we’ll always be grateful.

Yahoo, Intel have high hopes for Internet TV

(CNET) -- Yahoo and Intel built their success upon widespread use of personal computers, but the two companies hope products to be shown at next week's Consumer Electronics Show will mark the beginning of their Internet-fueled expansion to the world of TV as well.

The two companies have attracted several significant manufacturing and content allies in the attempt to bring new smarts and interactivity to a part of the electronics world that has remained a more passive part of people's digital lives.

Intel and Yahoo showed off Net-enabled TV prototypes in August, but the companies' technology will be presented in more finished form at the electronics show within products by Samsung, Toshiba, and a number of new partners that have signed on since the debut.

What exactly are they trying to achieve? For Yahoo, it's establishment of the Widget Channel, a software foundation that can house programs for browsing photos, using the Internet's abundant socially connected services, watching YouTube videos, or digging deeper into TV shows -- and through which Yahoo will be able to show advertisements.

For Intel, it's a foothold in an industry whose microprocessors have typically been cheaper, less powerful, and less power-hungry.

Yahoo is confident the products will catch on, in part because it's set "very low" licensing requirements, said Patrick Barry, vice president of Yahoo's Connected TV initiative.

"We do not see it as a niche offering in a few high-end models. We see this as moving into the mainstream. In 2009 we're going to see good penetration into the product lineups of the consumer electronics companies," Barry said. "Beginning in 2010, I think, you're going to see Internet-connected consumer electronics devices dominating the lineup."

But for both companies, TVs are terra incognita. "We emerged from the ocean of the PC," Barry said.

An anthropologist's view

Despite years of effort, the idea to put media-centric PCs in the living room hasn't caught on widely. But Intel, stung by its poorly received Viiv brand, has been taking the challenge seriously.

It even dispatched its top anthropologist--yes, the chipmaker employs anthropologists--to carefully study how people use TVs. In other words, Intel is trying to adapt to reality, not foist its ideas on an unwilling market.

Some people like to watch TV, but anthropologist Genevieve Bell, director of user experience for Intel, likes to watch people watching TV. Specifically, Intel concluded that unlike the PC, TVs are social. People watch it together, and what they watch turns into what they talk about. Another difference from PCs: it must be simple and reliable, she said.

When bringing the Internet to the TV, "You couldn't just turn it into a PC," she said.

And it's pretty obvious why those not in the TV market would be angling for a piece of the action. People in the U.S. spend about 5 times more time watching TV than using a computer, Bell said. Globally, it's a factor of 25; unusually, the TV and PC time is at parity in Israel, perhaps because of communication habits, she added.

More ads

For decades, people have been accustomed to advertising-supported television. The Widget Channel technology opens up some new horizons for Yahoo, though Barry said the company isn't going to rush to plaster sponsorships over the new interface.

"We have a lot of support from the advertising community, but we're focused on the consumer now," Barry said. "What you'll see initially is us trying to fall all over ourselves trying to make the consumer happy. The advertisers understand that." He wouldn't comment on when advertising will be launched with the technology.

Although Yahoo will eventually show ads, it won't have a lock on them. Barry said: "We are not going to be locking down anything from a walled garden perspective, including monetization. We get a nice advantage, knowing the ins and outs, but we will not limit the platform to being addressable by us."

There are many opportunities for ads, including the dock that can be shown across the bottom of the TV screen and in pages that fill the screen.

The Widget Channel technology is based on the Widget Engine software Yahoo got in 2005 with its acquisition of Konfabulator, and it lets programmers write a wide variety of applications.

Course corrections

Intel learned from initial testing of the TV technology, Bell said. For one thing, the company found that people didn't like the Widget Channel controls appearing on the left edge of the screen, one option the companies had demonstrated. Instead, people prefer the bottom, where they're accustomed to seeing text already.

For another, she said, people expressed a powerful desire for a big button to make the software go away in one fell swoop--no menus or arrow keys or complication--so they could get back to watching TV when they wanted. That big button is also used to activate the Widget Channel.

And nobody wanted yet another remote control.

To help chart its long-term course, Intel gauged consumer sentiment in part by asking what people thought the future of TV would look like. People's answers generally fit into a few categories:

• Something that would provide relevant information in real time, such as the weather right before heading to a sporting event.

• Something that would connect them to other people they care about, a variation of social networking.

• Something that would let them participate more with what they're watching, for example by figuring out where a show's cast members already had acted, or finding, rating, and sorting content.

Few, though, wanted a full-on Web browser, nor a keyboard to clutter up the room.

Yahoo sees the same fallow ground as Intel in the market.

TV innovations that have succeeded focused on screen size, image fidelity, and flat-screen technology, Barry said. "But the consumer electronics industry has not really explored the...connectivity that the Internet provides."

Company Offers Lifetime Anonymous BitTorrent For $50.00

In these harsh economic times, everyone is looking out for a bargain. A new VPN service launched recently offering a lifetime of anonymous BitTorrent, completely unlimited, for a one-off $50.00 payment. Sounds good? We take a closer look to see if the numbers stack up.

During the last year there has been a surge in businesses offering VPN (Virtual Private Network) services to those who prefer to operate with a degree of anonymity on the Internet. A VPN service assigns your PC with a different IP address to your regular one, making it much more difficult for people to identify you on the Internet. A VPN service could also help you access blocked websites or services such as BitTorrent or Skype, and offer security while accessing the Internet via public hotspots.

A good VPN service offering unlimited data transfers and healthy speeds usually costs around $10 to $20 per month, so when a new service launched this week, offering all this for a one-off payment of $29.00 (introductory price), it warranted further investigation.

According to their website, the people behind VPN4Life are entrepreneurs “striving to free the world from ISP monitoring, government restrictions, and capitalism’s growing influence on the Internet, one account at a time.” Offering unlimited bandwidth and 128 bit encryption through servers in the UK, Germany and Singapore with a 99.7% uptime guarantee, it certainly looked attractive. The official site carries little detail, so we contacted VPN4Life and asked a number of questions.

First of all, the $29.00 payment looked like an introductory offer, so how much would the service cost normally? VPN4Life told us the 20mb/sec fully BitTorrent compatible unlimited bandwidth PPTP service would cost “between $45 and $50”, while confirming that the payment is indeed a one-off for a lifetime subscription.

Since there is no privacy policy on the site we asked a few questions along those lines. VPN4Life told us that they do not log what any of their customers do. We asked about the lack of a displayed Terms of Service and their response was it wasn’t needed. “Customer pays, we provide VPN,” they told us, while assuring that they would never divulge any customer information to 3rd parties, since they have nothing stored to give them.

$50.00 for life sounds an amazing offer - but is this super-low price sustainable? The immediate difficulty with a lifetime subscription is that once off the ground, the company is then responsible for providing a service to thousands of members forever who paid very little in the first place. More and more new signups are then required to pay for the spiraling hardware and bandwidth costs and since VPN4Life offer unlimited bandwidth, it’s difficult to see how the whole operation can be sustained.

As far as the real costs of bandwidth go, we spoke with Bruce at VPN provider Perfect Privacy who told us: “There is a reason why we currently charge about EUR 10.00 to EUR 15.00/month (depending if you pay for 3 or 24 months in advance), namely that 1 mbps of dedicated bandwidth in the West costs about EUR 10.00 to US$ 15.00 at the very minimum. In Asia it costs about US$ 80.00/mbps. That’s US$ 1,500 (U.S/Europe) to US$ 8,000 (Asia) every month just for 100 mbps.”

In the face of these figures, the VPN4Life offer starts to look vulnerable indeed. “How are they going to pay for their ever increasing bandwidth needs if the number of paying members becomes ever smaller in relation to the total number of members?” asked Bruce, rhetorically. He has a very, very good point. It looks impossible, much like the classic pyramid scheme.
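
To make Bruce's arithmetic concrete, here is a small back-of-the-envelope sketch. The per-mbps prices and the 100 mbps line are the figures he quoted; the $50 one-off price is VPN4Life's stated upper price, and the number of customers sharing the line (500) is purely our own assumption for illustration.

```python
# Back-of-the-envelope check of the bandwidth figures quoted above.
# The customer count is a hypothetical assumption, not a VPN4Life figure.

def monthly_bandwidth_cost(mbps: float, price_per_mbps: float) -> float:
    """Monthly cost in US$ of a dedicated line of the given size."""
    return mbps * price_per_mbps


def months_covered(one_off_payment: float, customers: int,
                   mbps: float, price_per_mbps: float) -> float:
    """Months of bandwidth that the pooled one-off payments pay for."""
    pool = one_off_payment * customers
    return pool / monthly_bandwidth_cost(mbps, price_per_mbps)


west = monthly_bandwidth_cost(100, 15)   # quoted: US$ 15 per mbps
asia = monthly_bandwidth_cost(100, 80)   # quoted: US$ 80 per mbps
print(west, asia)

# Assumption: 500 customers at $50 each share one 100 mbps line.
print(months_covered(50, 500, 100, 15))
print(months_covered(50, 500, 100, 80))
```

Under that assumption the pooled payments cover roughly 17 months of bandwidth in the West and about three months in Asia, before any hardware or staff costs. After that, the service depends entirely on new signups.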

Some might feel that at $50.00 this service is worth a try but I strongly believe that if something looks too good to be true, then it probably is. Time will tell, but I won’t be changing provider, that’s certain.

Android netbooks on their way, likely by 2010

[Update: Since posting this story, we've had a lot of inquiries from readers, with questions ranging from whether Android is ready for laptops and full-scale PCs, why Android can't rely fully on Linux, and so on. See our follow-up Android FAQ post.]

The image above shows an Asus Eee PC 1000H netbook running Google’s mobile operating system, Android. Huh? You thought Android was for mobile phones, right? Well, as we’ve written before, Google is planning to use Android for any device, not just mobile phones.

Besides writing as freelancers for VentureBeat, we also run a startup called Mobile-facts. It took us about four hours of work to compile Android for the netbook. Having done so, we (Daniel Hartmann, that is) got the netbook fully up and running on it, with nearly all of the necessary hardware you’d want (including graphics, sound and the wireless card for internet) running. See the images below for further impressions.

Here’s the significance: Imagine the billion dollar market at stake here if Google can make good on this vision. Netbooks are basically small-scale PCs. For Silicon Valley’s myriad software companies, it means a well-backed, open operating system that is ripe for building upon. Now think of Chrome, Google’s web browser, and the richness it allows developers to build into the browser’s relationship with the desktop. All of this could usher in a new wave of more sophisticated web applications, cheaper and more dynamic to use. Ramifications abound: What does it mean for the stock price of Microsoft? Microsoft currently owns the vast majority of the desktop operating system market. In recent weeks, Microsoft’s Steve Ballmer repeatedly dismissed Android as competition to Windows Mobile.

Back to our experience in compiling Android for the Asus netbooks. It shows us that there is a big technology push to let Android run on netbooks under way.

Based on the progress we see in the Android open source project, we believe that getting an Android netbook to market is doable in as few as three months. Of course, the timing depends as much on decisions by the partners in Google’s OHA alliance and other developers contributing to Android, as it does on Google itself. It is these partners — including device makers and carriers — who decide how and when to adopt Android for different devices and markets. As we note below, Intel is one such contributor working on the adoption of Android to a notebook.

Mass production of the netbooks would be possible within three to nine months, depending on circumstances, two sources familiar with such matters told us. However, as we evaluate the progress of the various OHA projects, we expect conditions for a mass-market netbook to ripen in 2010, rather than in 2009. Right now a variety of OHA members, announced and unannounced, are working on projects to set up a sufficient ecosystem.

One important part of the ecosystem would be to have a set of well-functioning applications (an office productivity suite, for example). Google is mostly leaving applications development for Android to third parties (applications which run in the browser like Google Docs being the notable exception). At the rate things are going, we don’t see enough of these third parties developing applications for Android netbooks in the next 12 months. There have been recent predictions about Android netbooks appearing in 2009.

Background

In researching for our Android coverage at VentureBeat, we’ve participated in various Android developer groups and frequently play around with Android to understand some of the issues behind it. The trigger for us to do the compilation was some news on the Android Porting Google Group. In it, Google developer Dima Zavin claimed a couple of days ago that he ported Android to an Asus EeePC 701. So we decided to have our own go at another Asus netbook.

“Compilation” is a process which is needed for a machine such as a PC to be able to use an operating system and understand code. Zavin was compiling Android for a regular Intel CPU, which is what the Asus netbook runs on. The G1 phone, the first commercial mobile phone that Android runs on, however, runs on a different processor: the ARM CPU. Taking Zavin’s work as credible, we assumed that compilation wouldn’t take that much time.

Android’s Linux core makes experimental compilations like ours possible. For example, compilations require something called drivers. Drivers are programs that let an operating system like Android communicate with various computer hardware. There are already a lot of Linux drivers, and Linux is able to run on a lot of different computer architectures. Otherwise we’d have needed to build our drivers from scratch.

Android Netbooks coming, but more likely in 2010

We already argued back in August that Android wants to be on any device, not just a phone. Android is designed to run on any device in a category widely referred to as “embedded devices.”

The fact that various OHA partners have already developed Android enough to easily work on our netbook may be considered evidence enough that Google is getting increasing buy-in from industry players to realize this vision. We found two additional indicators that technology is being developed in this direction.

For one, we discovered that Android already has two product “policies” in its code. Product policies are operating system directions aimed at specific uses. The two policies are for 1) phones and 2) mobile internet devices, or MID for short. MID is Intel’s name for ‘mobile internet devices,’ which include devices like the Asus netbook we got Android running on.

The context for our finding can be found here. The important line is this one:

PRODUCT_POLICY

android.policy_phone

android.policy_mid

Another indicator for a coming Android netbook is that Intel already had the right drivers for MID chips in place. You can view some parameter information here.

Overall, we’re impressed with the relative ease of the compilation. Android code is very “portable” and neat. Many observers, specifically Symbian supporters, have opined that Android would have problems because of its “open source” nature, leading to “chaotic code” and a tendency toward disintegration as developers take the OS in different directions. If true, that could give more controlled OS’s like Symbian, not to mention the iPhone’s, an advantage. Based on our experience with Android, we don’t see that danger mid-term. Quite possibly, Android competitor Symbian does not see that problem either, as the Symbian Foundation also decided to go down an open source path.

Pictures and Observations

After some additional work, the normal webkit browser is working fine on our Asus, and so is the music player. At first, we had problems getting both networking and sound running, though.

The Asus screen size is approximately 5 times bigger than the G1 screen. Adapting to the screen size was not an issue, as Android did the adaptation automatically.

The open source version of Android does not include Android Market. Therefore we haven’t yet downloaded any apps.

In “Settings,” we stumbled upon the feature “Select locale.” In it, we noticed that the following translations of Android are under way: Czech, German, English (Australia, United Kingdom, Singapore, United States), Spanish, Japanese, German and Dutch. Expect speculation on devices launching in these markets soon.

Official Fix for the Zune 30 Fail

Microsoft's responded to the Zune 30GB failure, blaming a leap-year handling bug. And they've provided a fix. Which is to wait til New Years, when the bug will go away by itself. Huh.

Early this morning we were alerted by our customers that there was a widespread issue affecting our 2006 model Zune 30GB devices (a large number of which are still actively being used). The technical team jumped on the problem immediately and isolated the issue: a bug in the internal clock driver related to the way the device handles a leap year. That being the case, the issue should be resolved over the next 24 hours as the time change moves to January 1, 2009. We expect the internal clock on the Zune 30GB devices will automatically reset tomorrow (noon, GMT). By tomorrow you should allow the battery to fully run out of power before the unit can restart successfully then simply ensure that your device is recharged, then turn it back on. If you're a Zune Pass subscriber, you may need to sync your device with your PC to refresh the rights to the subscription content you have downloaded to your device.

Customers can continue to stay informed via the support page on zune.net (zune.net/support).

We know this has been a big inconvenience to our customers and we are sorry for that, and want to thank them for their patience.

Q: Why is this issue isolated to the Zune 30 device?

It is a bug in a driver for a part that is only used in the Zune 30 device.

Q: What fixes or patches are you putting in place to resolve this situation?

This situation should remedy itself over the next 24 hours as the time flips to January 1st.

Q: What's the timeline on a fix?

The issue Zune 30GB customers are experiencing today will self resolve as time changes to January 1.

Q: Why did this occur at precisely 12:01 a.m. on December 31, 2008?

There is a bug in the internal clock driver causing the 30GB device to improperly handle the last day of a leap year.

Q: What is Zune doing to fix this issue?

The issue should resolve itself.

Q: Are you sure that this won't happen to all 80, 120 or other flash devices?

This issue is related to a part that is only used in Zune 30 devices.

Q: How many 30GB Zune devices are affected? How many Zune 30GB devices were sold?

All 30GB devices are potentially affected.
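
Microsoft's statement does not show the faulty code, so the following is only a hypothetical sketch of how a clock routine can choke on the last day of a leap year and then "fix itself" a day later. The function names, the 1980 start year and the step cap (a stand-in for a device that hangs) are all our assumptions, not details from the statement.

```python
from datetime import date

# Hypothetical illustration only: not the actual Zune driver code.

def is_leap_year(year: int) -> bool:
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


def day_number(d: date, start_year: int = 1980) -> int:
    """1-based count of days since January 1 of start_year (assumed epoch)."""
    return (d - date(start_year, 1, 1)).days + 1


def year_from_days_buggy(days: int, start_year: int = 1980,
                         max_steps: int = 10_000):
    """Convert a day count to a year; returns None if the loop gets stuck."""
    year = start_year
    for _ in range(max_steps):  # a real driver would loop forever instead
        if days <= 365:
            return year
        if is_leap_year(year):
            if days > 366:
                days -= 366
                year += 1
            # Bug: when days == 366 nothing changes, so the loop never ends.
        else:
            days -= 365
            year += 1
    return None


def year_from_days_fixed(days: int, start_year: int = 1980) -> int:
    """Same conversion, comparing against the actual length of each year."""
    year = start_year
    while True:
        year_length = 366 if is_leap_year(year) else 365
        if days <= year_length:
            return year
        days -= year_length
        year += 1


for d in (date(2008, 12, 30), date(2008, 12, 31), date(2009, 1, 1)):
    n = day_number(d)
    print(d, year_from_days_buggy(n), year_from_days_fixed(n))
```

In this sketch the buggy version handles December 30 and January 1 normally and only gets stuck on December 31, day 366 of the leap year. That is consistent with a problem that goes away by itself once the date rolls over.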

Ralph Bakshi: Tips on Surviving In Tough Times

Back at Comic Con in 2008 Ralph Bakshi gave an amazing interview on how to survive in tough times. As a creative working person this inspires me a great deal, so I’d like to share some of my take away points from Bakshi’s insights.

But first you have to understand something about Ralph Bakshi: He started his career in the 60s after Disney had passed in both the physical and creative sense. The 30s and 40s were a golden age for theatrical animation, and in the 50s television killed all of that. Also Alfred Hitchcock killed the theatrical short by insisting that there be no cartoons before his film Psycho — the result killed an already pressured animation industry.

By the 60s opportunities looked bleak: the field was already packed with established artists who had paid their dues, and the big companies were in decline. Ralph Bakshi’s solution was brilliant: Instead of dreaming of the past he made his own films that were aimed at adults (example: Fritz the Cat). By doing this Bakshi created a career that lasted into the early 90s while Disney almost went under in the early 80s. So here’s what I’ve learned from Bakshi:

• Tough Times are a Chance to Reinvent an Industry

• Don’t Work for the Big Studios, Work For Yourself

• Technology Allows You To Take on the Big Guys

• Develop New Markets for Your Work

• Creatively Zig When Everyone is Still Zagging

• What’s Been Successful For Years Can Become Stale

And here’s the video for your inspiration:

By the way it should be noted that the animation industry itself hit a high point during the Great Depression. In the early days of the 20s the industry was crowded with many startup studios, but the 30s thinned the herd and forced the survivors to innovate. It’s out of this period that we see Snow White, which was the first full length feature animated film; in a sense Disney reinvented the medium. What’s interesting is that Bakshi sort of acknowledges this when he’s putting down the Disney shorts of the early 30s which were quite dull (Mickey was a much more fun character in his black and white films).

Original here

Craigsphone brings Craigslist to the iPhone

by Mike Schramm on Jan 2nd 2009

Craigslist is one of my absolute favorite sites on the 'net -- it's been around for years, but kept the same simple look and feel, perfectly fulfilling the service of classifieds without ever once going off that course. Sure, there are issues with spam, but Craig and his minions have worked overtime to make the thing work, and it works well (in fact, if you see any weightlifting dumbbells for sale in Chicago, let me know, I need some).

There are quite a few iPhone apps featuring Craig and his list out there (including a few with prices on them), but one that caught our eye as a useful free app is Craigsphone, made by Next Mobile Web (they make the very useful Dial Zero app as well). As you can see from the video above, it's all the features of Craigslist made mobile, and then some -- you can see your history, post and call directly from the phone, and even use the iPhone's location to see Craigslist entries nearby (though unfortunately, the Nearby features only work in San Francisco and Manhattan -- no Chicago?). NMW claims they're still working on the app, too -- they want to "take the best local site in the world and make it truly local." Who knows what that means, but it sounds good, right?

If you spend a lot of time on Craigslist, or just want to while you're out and about, Craigsphone seems like a good way to do it. We're interested to see what else they've got planned, too.

Original here

Unlocked iPhone Market Gets 3G, But No Threat To Apple (AAPL)

Is this going to cause all the commotion that the first-generation unlocked iPhone created? Probably not.

If you recall, about a year ago, some Apple (AAPL) analysts started freaking out about unlocked iPhones that people were buying to hack and use on foreign cellphone carriers. For example, China Mobile said there were 400,000 unlocked iPhones on its network at the end of 2007 -- about 10% of all iPhones sold at that point.

Why all the fuss? The theory was that because Apple didn't have deals with these carriers, they were missing out on potential revenue sharing kickbacks. (In reality, this was never a real problem for Apple, which never lost anything material. Even Apple executives described it as a good problem to have.)

Those concerns died when Apple released the new iPhone 3G, which wasn't available unlocked... Until today.

So will people start freaking out again? Probably not. Why?

- Apple doesn't have revenue sharing deals with most of its new carrier partners. Instead, the carriers now subsidize the cost of the phone, and keep the monthly service revenue. So there's no revenue sharing money for Apple to "lose."

- The iPhone is available in dozens more countries than a year ago. So it's easier to buy an iPhone legitimately around the world -- there are fewer reasons to buy an unlocked one from the U.S. in the first place.

- It's harder to buy an iPhone in the U.S. now. You can't just show up and buy a gadget to unlock -- you are required to sign up for a 2-year contract before you can get your mitts on the hardware. AT&T will eventually sell a no-contract edition of the iPhone for $400 more, but that hasn't happened yet.

- An unlocked iPhone 3G is pretty useless in the U.S. You're still stuck paying for an AT&T contract, and T-Mobile's 3G network uses a different frequency here, which the iPhone can't access. And an iPhone can't use Verizon (VZ) or Sprint (S), unlocked or not.

So at this point, it seems the only people who will use unlocked iPhones are those who live where iPhones aren't on sale yet -- a large but shrinking population -- or people who want to hack them to run on different carriers. We assume this is a small minority of all iPhone buyers.

Apple Researching Gloves for Use with Multi-Touch Devices

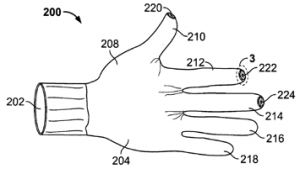

The U.S. Patent & Trademark Office today released a patent application from Apple describing research into gloves that allow the wearer to operate electronic devices. The patent application was filed on June 28th, 2007.

The invention, credited to Ashwin Sunder and Steven Hotelling at Apple, is a cold-weather glove consisting of two layers: a thin inner shell and a heavier outer shell. While Apple clearly intends for the glove to be used in conjunction with Multi-Touch devices such as the iPhone and iPod touch, the patent is written to cover a much broader class of electronic devices.

Apple describes several methods by which the wearer could operate electronic devices while wearing the gloves. One method involves using electrically conductive materials for both the inner and outer shells to allow the signal from the wearer's finger to be transmitted to the input mechanism on the electronic device. Apple acknowledges, however, that the thickness of the outer glove may hinder the tactile response to the wearer. Additional methods include removable caps covering the fingertips, or an elastic ring in the outer shell that would remain nearly closed until pressure from the finger inside the glove forces the ring open and allows the finger to access the device's input mechanism.

While many of the inventions disclosed in Apple's patent applications have yet to appear in commercial products, this application at a minimum demonstrates Apple's awareness of the difficulty of using Multi-Touch devices in cold-weather environments and documents some of their efforts to address the issue. Apple is also certainly not the only vendor to attempt to develop Multi-Touch-compatible gloves, as several other companies, including Dots Gloves, The North Face, and Freehands, have introduced their own solutions.

Original here

10 Mac 911 resolutions

Your packet of 10 Mac 911 resolutions for the new year.

• I resolve to back up my data. Regularly. Thoroughly.

• I resolve to purchase a copy of Alsoft’s Disk Warrior if I haven’t already, because I understand that it will save my bacon should my Mac experience the worst sort of low-level corruption.

• I resolve to seriously consider purchasing AppleCare for my new Mac because Macs, like anything, break, and some of those breaks can cost a small fortune. Much as I view extended warranties with suspicion, AppleCare is often a good investment.

• I resolve that if I’m going to open up an expensive hunk of hardware with the notion of improving it in some way, I’ll have the proper tools at hand (and this means more than a Swiss Army Knife) and a clear enough appreciation of my true skills that, if necessary, I can back out before I do The Bad Thing.

• I resolve to be polite when speaking with any tech support person because I understand that my problems were not caused by the person I’m speaking with.

• I resolve to sit in a healthy position when working at my computer and get up and walk around every so often because I don’t want to be mistaken for Quasimodo when I’m 42.

• I resolve to responsibly panic when my Mac’s hard drive begins to squeak and take immediate action along the lines of backing up my data and obtaining another drive from which I can boot my Mac.

• I resolve to not repair permissions on each day with a Y in its name because I mistakenly believe that it’s like giving your Mac a daily vitamin.

• I resolve to tag and rate my media—photos and music—when I first import it with the idea that two years from now I might want to find it.

• I resolve to rein in any condescension and smugness when talking computers with a PC user, understanding that not only do I not want to be one of those people, but also that my attitude may prevent a fellow human being from moving to a Mac for fear that they’d become one of those people.

Mac web share nears 10% in December

In spite of fears of a late-year plunge, Apple has again beaten its own market share record in December and now has a record 9.6 percent of web traffic as Microsoft's own influence continues to fall.

Net Applications' December results show Mac OS X surging from just under 8.9 percent in November to the new 9.6 percent mark for the tens of thousands of sites monitored by the web tracking firm.

The figure is an all-time high for Apple and a significant jump from the same period a year before, when the Mac maker held 7.3 percent.

Its iPhone also made significant inroads and claimed 0.44 percent versus 0.37 percent the previous month, and just 0.12 percent in December 2007. The handset still claims the title of the most popular non-desktop operating system and now has more than half the market share of Linux.

Apple's success during the holiday month, as with the month before, once again comes directly at Microsoft's expense. December represented the second month in a row where Windows had less than 90 percent and dropped nearly a full point to 88.7 percent; both Apple's computers and cellphones were responsible for much of the erosion of Windows' share.

Net Applications does caution that December can potentially skew the results. As more people are staying at home or are on vacation, the researchers note, users are more likely to be running Macs and iPhones than the Windows PCs that still rule the business world.

However, Apple has historically maintained or grown its share following the holiday spike and often uses the season as a platform for further gains.

Original here